You may have come across error code 1 when using FreeNAS with ZFS. There are several ways to fix this problem, which we discuss below.


RAIDZ2 of 4x Western Digital WD40EFRX Red (originally all WD20EFRX, expanded in 2020)

1x 512 GB Intel Optane L2ARC HBRPEKNX0202AH M.2 NVMe (replaces OWC Accelsior Mercury E2 PCIe SSD, 2021)

1x 200GB Intel DC S3710 ZIL w/ PLP (replaces 16GB Intel X-25-E SLC SSD, 2021)


Attached 16GB Kingston SNS4151S316G M.2 SSD via USB3 to M.2 adapter (replaces several failed thumb drives, 2018)

</p>


</header>

<ul>

<li> I rebuilt part of the network on my cluster and switched everything to ZFS over iSCSI so that I could use LACP and Linux bonded interfaces to improve throughput and redundancy. </li>

<li> I am connecting the cluster to FreeNAS via the ZFS-over-iSCSI patch from TheGrandWazoo: https://github.com/TheGrandWazoo/freenas-proxmox </li>

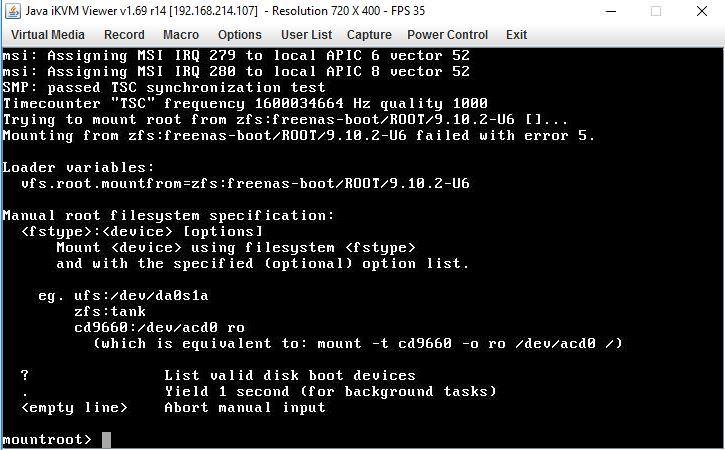

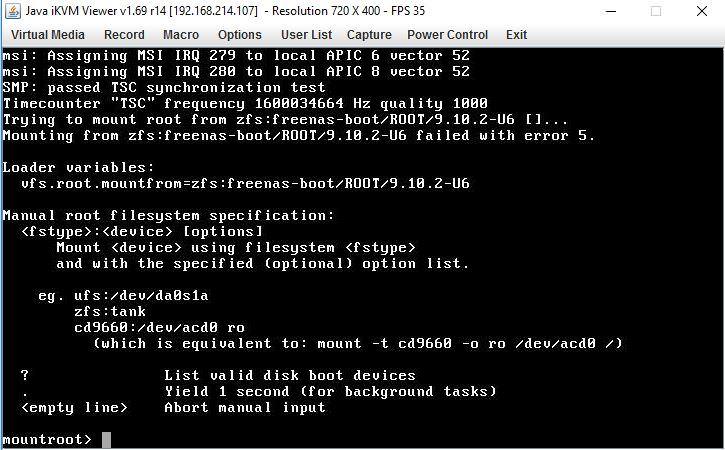

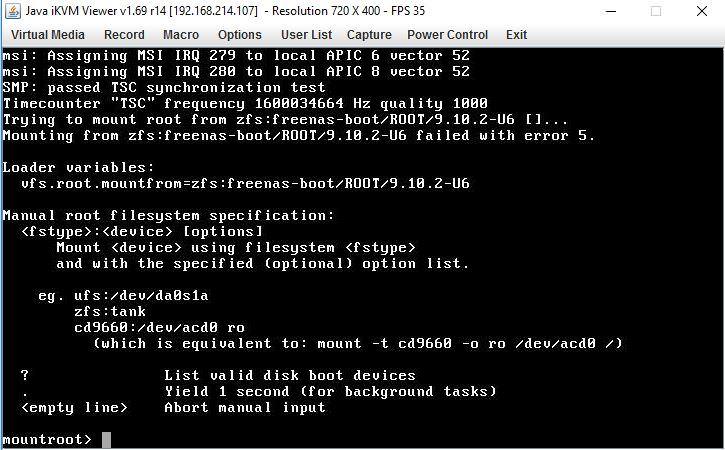

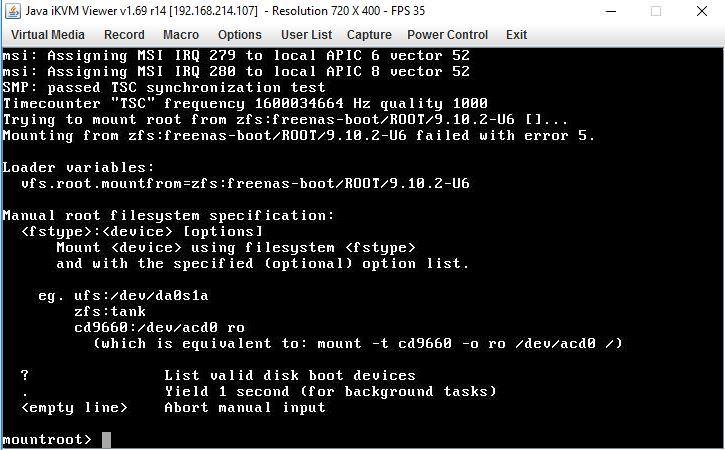

<li> When I made the change, I already had one virtual machine on the FreeNAS device. That VM starts without problems: </li>

<li> root@proxmox1:~# qm start 100 <br />Rescanning session [sid: 8, target: iqn.target-1.com.freenas.ctl:training1, portal: 192.168.8.224,3260] <br />Rescanning session [sid: 1, target: iqn.target-1.com.freenas.ctl:training1, portal: 192.168.8.224,3260] <br />root@proxmox1:~# </li>

<li> I am getting excellent throughput on 10GbE LACP NICs:

<p><img onerror="this.src='https://itnewstoday.net/wp-content/plugins/replace-broken-images/images/default.jpg'" loading = "lazy" src = "https://forum.proxmox.com/data/attachments/15/15773-f6a8ac36adfa0f967515f7b6b8bd80ae.jpg"> </p>

</li>

<li> The problem occurs when I start a new virtual machine that I have created. With the Proxmox GUI I can create a virtual machine on the FreeNAS device without any problem (both the existing and the new virtual disks appear in FreeNAS):

<p><img onerror="this.src='https://itnewstoday.net/wp-content/plugins/replace-broken-images/images/default.jpg'" loading = "lazy" src = "https://forum.proxmox.com/data/attachments/15/15774-7e10b5273f12febd9541514a4c2f35fb.jpg"> </p>

</li>

</ul>

<p></p>

<ul>

<li> When I try to start this VM, the LUN cannot be reached (in this case LUN 2, as highlighted):

<p>Rescanning session [sid: 1, target: iqn.target-1.com.freenas.ctl:training1, portal: 192.168.8.224,3260] <br />Rescanning session [sid: 1, target: iqn.target-1.com.freenas.ctl:training1, portal: 192.168.8.224,3260] <br />kvm: -drive file=iscsi://192.168.8.224/iqn.target-1.com.freenas.ctl:training1/2,if=none,id=drive-scsi0,format=raw,cache=none,aio=native,detect-zeroes=on: iSCSI: Failed to connect to LUN : SENSE KEY:ILLEGAL_REQUEST(5) ASCQ:LOGICAL_UNIT_NOT_SUPPORTED(0x2500) </p>


<p> TASK ERROR: start failed: QEMU exited with code 1 </p>

</li>

<li> The LUN of the VM that already exists and continues to run smoothly is 1. A separate VM on LUN 0 appeared to have the same problem as above. </li>

</ul>
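<p> When comparing several failed starts, it can help to pull the portal, target and LUN number out of the kvm error line quoted above. The following is a minimal Python sketch, not part of Proxmox or FreeNAS: the name <code>parse_iscsi_lun_error</code> and the regular expression are assumptions based only on the layout of the log line in this post, so other QEMU versions may need a different pattern. </p>

```python
import re
from typing import NamedTuple, Optional


class IscsiLunError(NamedTuple):
    """Fields extracted from a failed 'kvm: -drive file=iscsi://...' line."""
    portal: str
    target: str
    lun: int
    sense_key: str
    ascq: str


# Assumed layout: iscsi://<portal>/<target-iqn>/<lun>, followed later on the
# same line by "SENSE KEY:<NAME>(<n>) ASCQ:<NAME>(0x<hex>)".
_PATTERN = re.compile(
    r"file=iscsi://(?P<portal>[^/]+)/(?P<target>[^/]+)/(?P<lun>\d+)"
    r".*SENSE KEY:\s*(?P<sense>\w+)\(\d+\)"
    r"\s*ASCQ:\s*(?P<ascq>\w+)\(0x[0-9a-fA-F]+\)"
)


def parse_iscsi_lun_error(line: str) -> Optional[IscsiLunError]:
    """Return the parsed fields, or None if the line has a different shape."""
    m = _PATTERN.search(line)
    if m is None:
        return None
    return IscsiLunError(
        portal=m["portal"],
        target=m["target"],
        lun=int(m["lun"]),
        sense_key=m["sense"],
        ascq=m["ascq"],
    )


if __name__ == "__main__":
    # Sample line copied from the error in this post.
    sample = (
        "kvm: -drive file=iscsi://192.168.8.224/"
        "iqn.target-1.com.freenas.ctl:training1/2,if=none,id=drive-scsi0,"
        "format=raw,cache=none,aio=native,detect-zeroes=on: "
        "iSCSI: Failed to connect to LUN : SENSE KEY:ILLEGAL_REQUEST(5) "
        "ASCQ:LOGICAL_UNIT_NOT_SUPPORTED(0x2500)"
    )
    print(parse_iscsi_lun_error(sample))
```

<p> The parsed LUN number can then be compared with the LUN IDs that FreeNAS actually exposes for the target. A LUN that Proxmox requests but FreeNAS does not list would be consistent with the LOGICAL_UNIT_NOT_SUPPORTED result. </p>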


</header>

<p> I managed to find a solution. See below if you have similar problems: </p>

<ul>

<li> The iSCSI service on my FreeNAS-11.2-U8 initially had trouble restarting after I reconfigured the network. This was because iSCSI and its associated settings were still bound to the previous network configuration. After I updated the iSCSI configuration for the new networks, the service started. </li>

<li> The ZFS pool on my FreeNAS-11.2-U8 needed to be scrubbed after the network changes. I did this (in FreeNAS) by going to Storage > Pools > [gear icon for my pool] > Scrub (NOTE: ZFS rebuilt the pool during the scrub)

<ul>

<li> At this point, the raw disk volumes for Proxmox VMs 107 and 108 were still present on FreeNAS. </li>

</ul>

</li>

</ul>

<p></p>

<ul>

<li> Then I rebooted FreeNAS, just to make sure everything was clean (this step was probably unnecessary, but I did it anyway). </li>

<li> When FreeNAS came back up, I tried to start VMs 107 and 108 in Proxmox, but got a new error message:

<ul>

<li><blockquote>

<p>TASK ERROR: Could not find lu_name for zvol vm-107-disk-0 at /usr/share/perl5/PVE/Storage/ZFSPlugin.pm line 118.</p>

</blockquote>

</li>

</ul>

</li>

<li> Because the error message said that the LUN name could not be found, I checked FreeNAS. I found that the raw disk volumes for VMs 107 and 108 were now gone (in my case that was fine, because I had been trying to delete those machines anyway. If the disks matter to you, however, please take note …) </li>

<li> I tried to delete the virtual machines in Proxmox but got the same lu_name error message. </li>

<li> Once I realized that the disks were missing, I simply detached the disk in the Proxmox GUI, which updates the VM config file. The detach succeeded. </li>

<li> I was then able to remove the virtual machines from Proxmox without any problems. </li>

<li> I can now create, start, stop, delete, etc. virtual machines on my FreeNAS LACP configuration. </li>

</ul>

<p> I have to praise Proxmox again after this: its error messages are good and usually tell you what the problem actually is. They give you just enough of a thread to start pulling. Thanks! </p>
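<p> The check described in the steps above (the error names a zvol, and the zvol turns out to be missing on FreeNAS) can be sketched in Python. This is only an illustration under stated assumptions: the helper names <code>zvol_from_error</code> and <code>dangling_disks</code>, the storage ID <code>freenas-iscsi</code> and the sample config are hypothetical. The list of existing zvols has to come from FreeNAS itself. </p>

```python
import re
from typing import Iterable, List, Optional

# Message layout assumed from the TASK ERROR quoted in this post.
_LU_NAME_ERROR = re.compile(
    r"lu_name (?:for )?zvol (?P<zvol>vm-\d+-disk-\d+)"
)

# Disk entries in a VM config look like "scsi0: <storage>:<volume>,size=32G".
_DISK_LINE = re.compile(
    r"^(?P<key>(?:scsi|virtio|sata|ide)\d+):\s*"
    r"(?P<storage>[^:,\s]+):(?P<volume>[^,\s]+)"
)


def zvol_from_error(message: str) -> Optional[str]:
    """Extract the zvol name from a lu_name error, or None if absent."""
    m = _LU_NAME_ERROR.search(message)
    return m["zvol"] if m else None


def dangling_disks(
    config_text: str, storage_id: str, existing_zvols: Iterable[str]
) -> List[str]:
    """Return config keys whose volume on storage_id no longer exists."""
    present = set(existing_zvols)
    missing = []
    for line in config_text.splitlines():
        m = _DISK_LINE.match(line.strip())
        if m and m["storage"] == storage_id and m["volume"] not in present:
            missing.append(m["key"])
    return missing


if __name__ == "__main__":
    error = (
        "TASK ERROR: Could not find lu_name for zvol vm-107-disk-0 "
        "at /usr/share/perl5/PVE/Storage/ZFSPlugin.pm line 118."
    )
    # Hypothetical VM config; "freenas-iscsi" is an assumed storage ID.
    config = (
        "scsi0: freenas-iscsi:vm-107-disk-0,size=32G\n"
        "ide2: none,media=cdrom\n"
    )
    print(zvol_from_error(error))
    # An empty zvol list models the situation where FreeNAS lost the disks.
    print(dangling_disks(config, "freenas-iscsi", []))
```

<p> Any key that <code>dangling_disks</code> reports is a disk entry that points at a volume FreeNAS no longer has. In the post, detaching such an entry in the Proxmox GUI was what allowed the VM to be deleted. </p>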



<div class="saboxplugin-wrap" itemtype="http://schema.org/Person" itemscope itemprop="author"><div class="saboxplugin-tab"><div class="saboxplugin-gravatar"><img src="https://itnewstoday.net/wp-content/uploads/zacharyanstey.jpg" width="100" height="100" alt="Zachary Anstey" itemprop="image"></div><div class="saboxplugin-authorname"><a href="https://itnewstoday.net/author/zacharyanstey/" class="vcard author" rel="author"><span class="fn">Zachary Anstey</span></a></div><div class="saboxplugin-desc"><div itemprop="description"></div></div><div class="clearfix"></div></div></div>

</article>

</div>



</div>


</div>

</div>

</main>



</div>

<!--/wrapper-->


</body>

</html>